Decomposing Node.js Monoliths with SQS

Rishav Sinha

Author

We've all been there. The legacy monolith: a single, massive codebase that holds years of business logic, bug fixes, and technical debt. Deployments are terrifying, onboarding is a nightmare, and a single bug in the user profile module can take down the entire payment processing system. It's time for a change, but a full rewrite is often a fantasy.

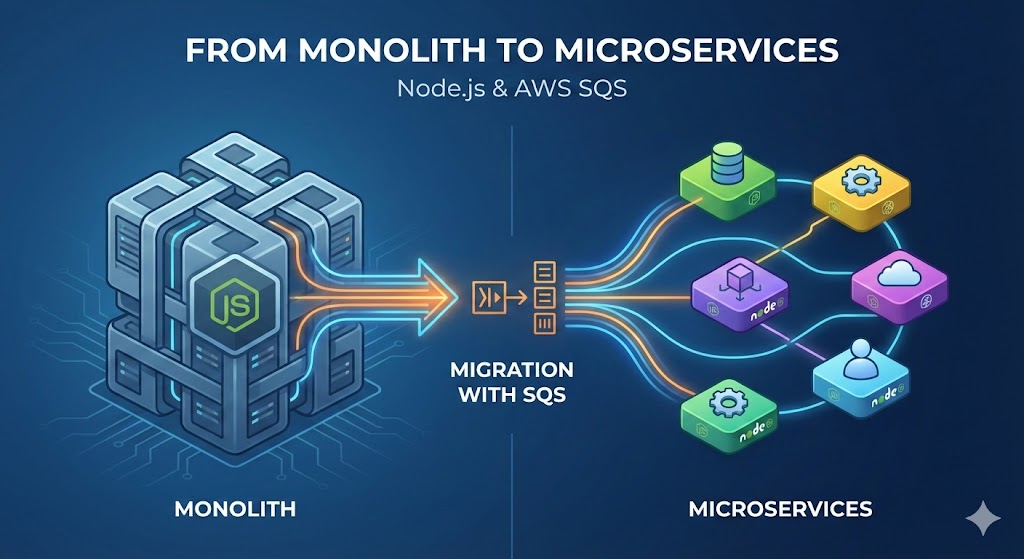

In this post, I'll walk you through a pragmatic, battle-tested approach to decomposing a feature from a legacy Node.js monolith. We'll use the Strangler Fig pattern, AWS Simple Queue Service (SQS), and event-driven architecture to safely carve out functionality piece by piece, without a "big bang" rewrite.

Identifying the Bounded Context

First, we need to find a "seam" in our monolith. In Domain-Driven Design, this is called a "Bounded Context": a part of the application with its own distinct logic and data. A perfect candidate is often a non-critical, yet high-volume, operation like sending notifications.

Let's look at our legacy OrderService. It's a classic example of a God object that does way too much in a single function call, awaiting every step one after another.

    // monolith/services/OrderService.js
    // DANGER: Tightly coupled and not resilient. What if Mailchimp is down?
    async function processOrder(orderId, orderDetails) {
      try {
        // 1. Process payment via Stripe
        const payment = await stripe.charges.create({ ... });
        await Order.update({ orderId }, { status: 'PAID', paymentId: payment.id });

        // 2. Update inventory
        await InventoryService.decrementStock(orderDetails.items);

        // 3. Send order confirmation email (OUR TARGET!)
        await mailchimp.messages.send({
          to: orderDetails.customerEmail,
          subject: 'Your order is confirmed!',
          // ...email body
        });

        // 4. Trigger fulfillment
        await FulfillmentService.shipOrder(orderId);

        return { success: true, orderId };
      } catch (error) {
        console.error(`[OrderService] Failed to process order ${orderId}:`, error);
        // Potentially roll back transactions... a complex problem for another day.
        throw new Error('Order processing failed.');
      }
    }

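The problem here is clear: the mailchimp.messages.send call is awaited inline, in the middle of the order flow. If the Mailchimp API is slow, our entire processOrder function hangs and the user gets a spinning loader; if it's down, the call throws and the order fails even though the payment has already gone through. This is a perfect piece of functionality to decouple.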

The Strangler Fig Pattern: Our Guiding Light

Instead of rewriting OrderService, we'll "strangle" it. The pattern involves wrapping the old system with a new one, gradually redirecting traffic until the old system is no longer needed. Our "new system" is an event published to a queue.
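To make "gradually redirecting traffic" concrete, here is a minimal sketch of a percentage-based switch. The helper names (bucketFor, useNewPath) and the idea of keying the decision on the order ID are my own assumptions for illustration, not something the monolith already has.

```javascript
// Hypothetical helpers: decide per order whether to take the new path.
// Hashing the order ID keeps the decision stable for a given order,
// so a retried request does not flip between old and new behaviour.
function bucketFor(orderId) {
  let hash = 0;
  for (const char of String(orderId)) {
    hash = (hash * 31 + char.charCodeAt(0)) >>> 0; // stay within unsigned 32 bits
  }
  return hash % 100; // bucket in the range 0..99
}

function useNewPath(orderId, rolloutPercent) {
  return bucketFor(orderId) < rolloutPercent;
}

// Possible usage inside the monolith (rolloutPercent would come from config):
// if (useNewPath(orderId, rolloutPercent)) { /* new path */ } else { /* old path */ }
```

Starting at a small percentage and raising it as the dashboards stay green is one way to keep the old code path available as a fallback until the very end.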

First, let's modify the monolith to publish an ORDER_CONFIRMED event instead of calling the email service directly.

    // monolith/services/EventPublisher.js
    import { SQSClient, SendMessageCommand } from "@aws-sdk/client-sqs";

    const sqsClient = new SQSClient({ region: "us-east-1" });
    const queueUrl = process.env.NOTIFICATION_QUEUE_URL;

    // A simple, reusable publisher
    export const publishOrderConfirmedEvent = async (payload) => {
      if (!queueUrl) {
        throw new Error("NOTIFICATION_QUEUE_URL is not set.");
      }

      const command = new SendMessageCommand({
        QueueUrl: queueUrl,
        MessageBody: JSON.stringify(payload),
        MessageAttributes: {
          EventType: {
            DataType: "String",
            StringValue: "ORDER_CONFIRMED",
          },
        },
      });

      try {
        const data = await sqsClient.send(command);
        console.log(
          `Event ORDER_CONFIRMED published with MessageId: ${data.MessageId}`
        );
        return data;
      } catch (error) {
        // A robust system would have retries or circuit breakers here
        console.error("Failed to publish event to SQS:", error);
        throw error;
      }
    };

@aws-sdk/client-sqs: We're using the modern, modular AWS SDK v3.

SendMessageCommand: This is the command pattern used by the v3 SDK, which is great for type safety with TypeScript.

MessageAttributes: I'm a huge advocate for using message attributes. They allow consumers to route or filter messages based on metadata without having to parse the entire MessageBody.
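Here is a rough sketch of what attribute-based routing could look like on the consuming side. The handlers map and the dispatch function are hypothetical names of mine. The message shape (MessageAttributes.EventType.StringValue, Body) follows what the v3 SDK returns from a receive call when the attributes are requested.

```javascript
// Hypothetical handler table keyed by the EventType message attribute.
const handlers = new Map([
  ["ORDER_CONFIRMED", (body) => `send confirmation for order ${body.orderId}`],
]);

// Choose a handler from metadata alone. The body is only parsed once we
// know there is a handler that wants it.
function dispatch(message, handlerTable) {
  const eventType = message.MessageAttributes?.EventType?.StringValue;
  const handler = handlerTable.get(eventType);
  if (!handler) {
    return { handled: false, eventType };
  }
  return { handled: true, eventType, result: handler(JSON.parse(message.Body)) };
}
```

Adding a second event type later is then a matter of adding one entry to the map, without touching the parsing or polling code.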

Now, we update the original processOrder function:

    // monolith/services/OrderService.js (Updated)
    import { publishOrderConfirmedEvent } from "./EventPublisher";

    async function processOrder(orderId, orderDetails) {
      // ... (Payment and Inventory logic remains the same)

      // 3. Send order confirmation email (REPLACED!)
      // OLD: await mailchimp.messages.send(...);
      // NEW: Publish an event and move on.
      await publishOrderConfirmedEvent({
        orderId,
        customerEmail: orderDetails.customerEmail,
        customerName: orderDetails.customerName,
      });

      // ... (Fulfillment logic remains the same)

      return { success: true, orderId };
    }

The monolith's responsibility now ends with publishing a message. The processOrder call no longer waits on the email provider: it returns once the payment, inventory, publish, and fulfillment steps finish, and the email is sent asynchronously by a different system.
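The order flow now depends on the publish call succeeding, so the retry mentioned in the publisher's catch block is worth sketching. This is a minimal, hypothetical withRetry wrapper with exponential backoff. The name, the defaults, and the injectable sleep are assumptions of mine. The v3 SDK also has its own built-in retry behaviour, which may already be enough for transient errors.

```javascript
// Hypothetical wrapper: retry an async operation with exponential backoff.
// `sleep` is injectable so the delay can be stubbed out in tests.
const defaultSleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

async function withRetry(
  operation,
  { attempts = 3, baseDelayMs = 100, sleep = defaultSleep } = {}
) {
  let lastError;
  for (let attempt = 1; attempt <= attempts; attempt++) {
    try {
      return await operation();
    } catch (error) {
      lastError = error;
      if (attempt < attempts) {
        // Delay doubles on each failure: baseDelayMs, 2x, 4x, ...
        await sleep(baseDelayMs * 2 ** (attempt - 1));
      }
    }
  }
  throw lastError; // every attempt failed, surface the last error
}

// Possible usage inside processOrder:
// await withRetry(() => publishOrderConfirmedEvent({ orderId, customerEmail, customerName }));
```

Keeping the attempt count low matters here, because every retry adds to the time the customer waits for the order response.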

Provisioning Our Queue with Terraform

I never create production infrastructure by clicking in the AWS console. It's not repeatable, auditable, or scalable. We'll define our SQS queue and its associated Dead-Letter Queue (DLQ) using Terraform.

A DLQ is non-negotiable for any production messaging system. If a message fails to be processed multiple times (a "poison pill"), SQS will automatically move it to the DLQ, so it doesn't block the main queue and kill your consumer.

    # terraform/sqs.tf
    resource "aws_sqs_queue" "notification_dlq" {
      name = "notification-queue-dlq"
    }

    resource "aws_sqs_queue" "notification_queue" {
      name                       = "notification-queue"
      delay_seconds              = 0
      max_message_size           = 262144 # 256 KB
      message_retention_seconds  = 86400  # 1 day
      visibility_timeout_seconds = 60     # Give our service 60s to process

      redrive_policy = jsonencode({
        deadLetterTargetArn = aws_sqs_queue.notification_dlq.arn
        maxReceiveCount     = 5 # After 5 failed attempts, send to DLQ
      })

      tags = {
        Environment = "production"
        ManagedBy   = "terraform"
      }
    }

visibility_timeout_seconds: This is a critical setting. It's the amount of time a message is hidden from other consumers after one consumer picks it up. You must set this to be longer than the time your consumer takes to process a message. If a consumer receives messages in batches and handles them one after another, the timeout has to cover the whole batch.

redrive_policy: This connects our main queue to the DLQ. We're telling SQS to move a message to notification-queue-dlq after it has been received (and failed processing) 5 times.
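These two settings together determine how long a poison message keeps circulating. As a back-of-the-envelope sketch, assuming the consumer picks a message up as soon as it becomes visible and never shortens the timeout itself, the time to reach the DLQ is roughly the visibility timeout multiplied by the receive count. The function name is mine.

```javascript
// Values copied from the Terraform configuration above.
const visibilityTimeoutSeconds = 60;
const maxReceiveCount = 5;
const messageRetentionSeconds = 86400;

// Each failed attempt hides the message for one full visibility timeout
// before it can be received again, so the delays simply add up.
function secondsUntilDeadLettered(visibilityTimeout, maxReceives) {
  return visibilityTimeout * maxReceives;
}

const estimate = secondsUntilDeadLettered(visibilityTimeoutSeconds, maxReceiveCount);
console.log(`Roughly ${estimate}s before a poison message is dead-lettered`);

// Sanity check: the message must not expire before it can reach the DLQ.
console.log(`Fits inside retention: ${estimate < messageRetentionSeconds}`);
```

With the numbers above, that is about five minutes, comfortably inside the one-day retention window. A slower consumer only makes the real figure larger.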

The New, Lean 'Notification' Microservice

Now for the fun part: a brand-new, isolated service whose only job is to consume messages from the queue and send emails. It's small, easy to test, and can be deployed independently of the monolith.

    // notification-service/consumer.js
    import {
      SQSClient,
      ReceiveMessageCommand,
      DeleteMessageCommand,
    } from "@aws-sdk/client-sqs";
    import { sendOrderConfirmationEmail } from "./emailService";

    const sqsClient = new SQSClient({ region: "us-east-1" });
    const queueUrl = process.env.NOTIFICATION_QUEUE_URL;

    const processMessage = async (message) => {
      console.log(`Processing message: ${message.MessageId}`);
      const body = JSON.parse(message.Body);
      // The publisher sends the payload itself as the body and puts the event
      // type in a message attribute, so read each from the right place.
      const eventType = message.MessageAttributes?.EventType?.StringValue;

      // Basic validation
      if (eventType !== "ORDER_CONFIRMED" || !body.customerEmail) {
        console.warn("Invalid or unhandled message type, skipping.");
        return; // Don't throw; returning lets the poll loop delete the message.
      }

      await sendOrderConfirmationEmail(body);
      console.log(`Email sent for order: ${body.orderId}`);
    };

    const poll = async () => {
      console.log("Polling for messages...");
      const receiveCommand = new ReceiveMessageCommand({
        QueueUrl: queueUrl,
        MaxNumberOfMessages: 10,
        WaitTimeSeconds: 20, // Enable long polling
        MessageAttributeNames: ["All"],
      });

      try {
        const data = await sqsClient.send(receiveCommand);
        if (!data.Messages || data.Messages.length === 0) {
          return;
        }

        for (const message of data.Messages) {
          try {
            await processMessage(message);
            // CRITICAL: Delete the message after successful processing
            await sqsClient.send(
              new DeleteMessageCommand({
                QueueUrl: queueUrl,
                ReceiptHandle: message.ReceiptHandle,
              })
            );
          } catch (err) {
            console.error(`Error processing message ${message.MessageId}:`, err);
            // Don't delete the message; let it become visible again for a retry
          }
        }
      } catch (error) {
        console.error("Error receiving messages from SQS:", error);
        // Pause before the next poll so a persistent failure doesn't spin the loop
        await new Promise((resolve) => setTimeout(resolve, 5000));
      }
    };

    // Main loop: start the next long poll only after the previous one finishes.
    // A fixed 5-second timer would stack overlapping polls on top of the
    // 20-second long poll.
    const startConsumer = async () => {
      while (true) {
        await poll();
      }
    };

    startConsumer();

WaitTimeSeconds: 20: This enables SQS Long Polling. It's a massive cost-saver and reduces the number of empty requests your consumer makes.

processMessage: Each message is processed individually. An error processing one message should not crash the entire consumer.

DeleteMessageCommand: This is the most important line. You must explicitly delete the message after you've successfully processed it. If you don't, it will become visible again after the visibility_timeout expires and be processed again.
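That "processed again" case is worth guarding against, since SQS standard queues deliver at least once and a customer could otherwise receive the same confirmation twice. Below is a minimal sketch of a duplicate guard keyed on the order ID. createDeduper is a hypothetical helper of mine, and the in-memory Set is only for illustration: with more than one consumer instance, the record of what has been sent would need to live in a shared store.

```javascript
// Hypothetical in-memory guard against handling the same order twice.
function createDeduper() {
  const seen = new Set();
  return {
    // Returns true the first time a key is claimed, false on every repeat.
    claim(key) {
      if (seen.has(key)) return false;
      seen.add(key);
      return true;
    },
    // Give the key back if sending failed, so a later retry is let through.
    release(key) {
      seen.delete(key);
    },
  };
}

// Possible usage inside processMessage:
// if (!deduper.claim(orderId)) return;            // duplicate delivery, skip
// try { await sendOrderConfirmationEmail(...); }
// catch (err) { deduper.release(orderId); throw err; }
```

Releasing the key on failure keeps the retry path intact: the message stays on the queue, becomes visible again, and gets a fresh attempt.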

Early in my career, we built a consumer without a DLQ. A buggy deploy started publishing malformed messages. The consumer would fetch a message, fail to parse it, crash, and restart. Because the message was never deleted, it would become visible again and the next instance of the consumer would pick it up and crash. This "poison pill" message caused a cascading failure that brought down our entire notification system for three hours until we manually purged the queue. Never again.

Completing the Strangulation

We've now been running this new service in production for a few weeks. Our dashboards show that all ORDER_CONFIRMED events are being processed successfully. The monolith is no longer responsible for sending these emails. The final, deeply satisfying step is to remove the old, dead code.

    git rm monolith/services/OldMailchimpWrapper.js
    git commit -m "feat(notifications): Decompose order confirmation emails to microservice"

There's no feeling quite like deleting legacy code you no longer need. We've successfully carved out a piece of our monolith, making the entire system more resilient, scalable, and easier to maintain.

Final Thoughts and Next Steps

This event-driven approach using SQS is a powerful and pragmatic way to start a microservices journey. It avoids the risk of a full rewrite by allowing for incremental, safe changes. We've improved resilience (an email service outage no longer blocks orders) and scalability (we can scale the notification service independently).

The next steps would be to add better observability with tools like Datadog or OpenTelemetry, and for more complex workflows, explore orchestration patterns like Sagas using AWS Step Functions. But for now, we've taken a huge step in the right direction.